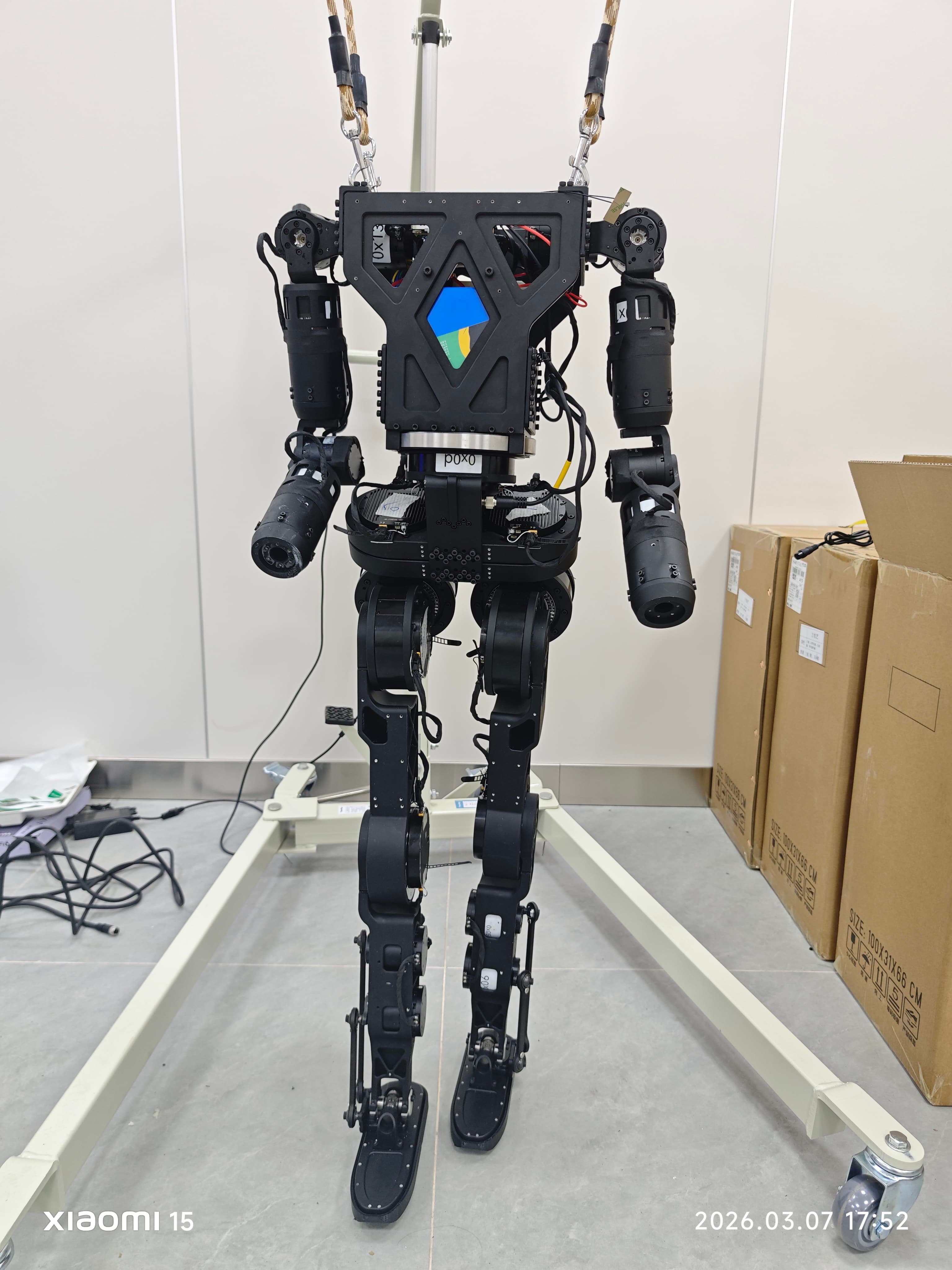

Embodied AI Housework Robot

Built a mobile manipulation system for household tasks, based on Diffusion Policy and a vision-language-action (VLA) model (π0.5). Deployed on a 7-DoF arm and a differential-drive base, with teleoperation (TeleOp) data collected from a single smartphone.

Background

Research project at the SenseTime–West China Hospital Joint Lab (Huaxi Jingchuang Medical Technology). The goal: build a mobile manipulation system capable of household tasks like wiping tables and transporting objects, using imitation learning from human demonstrations.

What I Did

Designed the full system around a Jetson edge compute node, integrating a 1080p 180° fisheye wrist camera and a RealSense depth camera for perception. Visual data streams wirelessly to a remote RTX 2080 Ti inference server running Diffusion Policy and VLA (π0.5) models. The Jetson receives the inferred actions and controls a Puwei P500 differential-drive base (via ROS 1 in Docker) and a Rokae ER3 Pro 7-DoF arm with a Jodell EPG two-finger gripper. Data collection uses smartphone AR teleoperation (TeleOp): a single phone captures the full 6-DoF pose used for both teleoperation and demonstration recording.
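As a rough illustration of the last step in that chain, the sketch below shows the standard differential-drive kinematics that turn a body velocity command into left and right wheel speeds. The `DiffDriveGeometry` class, the function name, and the wheel radius and track width values are hypothetical and are not taken from the Puwei P500 or from the project code; on the real base this conversion is most likely handled by the vendor's ROS driver.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiffDriveGeometry:
    """Hypothetical base geometry; values are placeholders, not P500 specs."""
    wheel_radius_m: float   # radius of each drive wheel
    track_width_m: float    # distance between the two drive wheels


def twist_to_wheel_speeds(v_mps, omega_radps, geom):
    """Convert a body twist into (left, right) wheel angular speeds in rad/s.

    v_mps is the forward velocity; omega_radps is the yaw rate, positive
    counterclockwise, so a positive yaw rate makes the right wheel faster.
    """
    half_track = geom.track_width_m / 2.0
    v_left = v_mps - omega_radps * half_track
    v_right = v_mps + omega_radps * half_track
    return v_left / geom.wheel_radius_m, v_right / geom.wheel_radius_m


if __name__ == "__main__":
    geom = DiffDriveGeometry(wheel_radius_m=0.1, track_width_m=0.5)
    print(twist_to_wheel_speeds(0.5, 0.0, geom))   # straight line
    print(twist_to_wheel_speeds(0.0, 1.0, geom))   # turn in place
```

Driving straight gives equal wheel speeds, and a pure rotation gives equal and opposite speeds, which is a quick sanity check when wiring policy outputs to the base.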

Challenges

Closing the perception–decision–execution loop over a wireless link with acceptable latency required careful pipeline optimization. The fisheye lens also introduced significant distortion, which had to be calibrated and corrected before images were fed into the policy network.
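To make the distortion correction concrete, here is a minimal sketch of the pixel mapping behind fisheye rectification. It assumes an ideal equidistant fisheye model (image radius proportional to the angle from the optical axis) and a shared principal point, which may not match the actual lens. The function name and all numeric values are hypothetical; a real pipeline would normally use calibrated intrinsics and distortion coefficients, for example from OpenCV's `cv2.fisheye` module.

```python
import math


def rectified_to_fisheye(u, v, cx, cy, f_fish, f_pin):
    """Find where a rectified (pinhole) pixel should sample the fisheye image.

    Assumed models (not the project's calibration):
      pinhole:     r_u = f_pin  * tan(theta)
      equidistant: r_d = f_fish * theta
    where theta is the angle between the ray and the optical axis.
    """
    dx, dy = u - cx, v - cy
    r_u = math.hypot(dx, dy)
    if r_u == 0.0:
        return cx, cy                    # the principal point maps to itself
    theta = math.atan2(r_u, f_pin)       # ray angle for this rectified pixel
    r_d = f_fish * theta                 # radius of that ray in the fisheye image
    scale = r_d / r_u
    return cx + scale * dx, cy + scale * dy


if __name__ == "__main__":
    # Hypothetical 1920x1080 image with made-up focal lengths in pixels.
    print(rectified_to_fisheye(1560.0, 540.0, 960.0, 540.0, 600.0, 600.0))
```

Evaluating this mapping once for every output pixel yields a lookup table, so the per-frame cost is a single remap, which matters when the loop is already latency-bound.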

Takeaways

Ongoing research. Currently exploring tactile-augmented diffusion policies for contact-rich assembly tasks, combining high-level action generation with high-frequency tactile residual correction.
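The two-rate idea can be sketched as a slow loop that supplies position targets and a fast loop that nudges each target using force feedback. This is purely illustrative and is not the project's controller: the learned tactile residual is replaced here by a clipped proportional rule on a single axis, and every name, gain, and limit is hypothetical.

```python
def clamp(x, lo, hi):
    """Limit x to the closed interval [lo, hi]."""
    return max(lo, min(hi, x))


def tactile_residual(force_n, target_force_n, gain_m_per_n, max_step_m):
    """Stand-in for a learned residual: proportional and clipped.

    Positive output pushes further along the approach axis, which is
    assumed to increase the measured contact force.
    """
    correction = gain_m_per_n * (target_force_n - force_n)
    return clamp(correction, -max_step_m, max_step_m)


def run_two_rate_loop(policy_targets_m, read_force, inner_steps,
                      target_force_n, gain_m_per_n, max_step_m):
    """Return the commands sent to the arm along one axis.

    policy_targets_m: low-rate targets, e.g. one per policy action.
    read_force: callable returning the latest force reading in newtons.
    inner_steps: number of fast corrections applied per policy target.
    """
    commands = []
    for target in policy_targets_m:          # slow loop: policy actions
        for _ in range(inner_steps):         # fast loop: tactile correction
            residual = tactile_residual(read_force(), target_force_n,
                                        gain_m_per_n, max_step_m)
            commands.append(target + residual)
    return commands


if __name__ == "__main__":
    cmds = run_two_rate_loop([0.1, 0.2], lambda: 2.0, inner_steps=2,
                             target_force_n=1.0, gain_m_per_n=0.001,
                             max_step_m=0.0005)
    print(cmds)
```

Clipping the residual keeps the fast loop from overriding the policy's intent, so the high-level action still sets the overall motion while the correction only adjusts contact.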

Gallery